I was a bit late to the game on Jonathan Harvey, who has now been gallivanting through stars and galaxies since 2012 (probably skipping rocks across the Milky Way with Karlheinz, who I understand consistently skips to 13, counting backwards whilst ritually removing his nose, ears, and fingers… and this, sweetie, is where babies come from). I’d heard the name, but little more than that until about four years ago, when my colleague Louis Chiappetta introduced me to Harvey’s Bird Concerto with Pianosong owing to my interest in Messiaen’s avian output. Since then I’ve found myself profoundly drawn to Harvey’s music (if you are a breathing human with a beating heart, this is not hard to do), something I’m grateful has happened in my musical life. I’ve only really played three of his pieces (I am currently learning the entirety of his piano output, which at 45 minutes total is relatively slight): first, the piece he’s probably best known for, Tombeau de Messiaen, an 8-minute romp for piano and electronics written in 1992 as a memorial to the eponymous French composer; then Run Before Lightning, a fiendish 2004 outburst of hot condensate and blurred fingers for flute and piano; and now Bird Concerto with Pianosong, a 2001 piano concerto (of sorts) for sinfonietta and electronics. To say I performed the latter at Cornell last April with Ensemble X, Timothy Weiss conducting, would be to assume I had ‘merely’ survived the rodeo bull test of concerto pianism. But this piece, in its current extant form, cannot ‘merely’ happen without a ton of DIY and unintended high-stakes team-building exercises. 
And I would say that’s a shame, because Bird Concerto is an indisputable masterpiece and should be getting play everywhere, except that my now overriding impression of Harvey’s work, from reading in the wake of his interests, playing some of his music, and really getting under the hood of this particular piece, is that his imagination thrived on this borderland of material and immaterial. As such, the unanticipated restoration work needed to make this piece happen now seems a puckishly poetic message from the composer as he traverses the stars. As with Debussy, a clear articulation of ambiguity is the musical goal, but any investigation into the purpose or motivation behind particular musical decisions seems to run circles around the same big signpost: “pleasure is the law.”

The obsessive performer whines, “Well, certainly there is craft! How else could you have constructed something so gloriously unified!?”

The master of mystic arts calmly replies, “Science can reduce to common unit: ‘sample’ ; and so connect : built net of that unit hybrid forms. The semantics, deeper meaning, can be changed (with imagination) otherwise it remains reductionist and brittle. New Insights, new connections, formed between Nature and Culture.”❁ [Any time I reference material like this from Harvey’s sketches, held at the Sacher Stiftung in Basel, I will mark it with this mandala-like symbol. My deepest gratitude to the Sacher Stiftung, and in particular to Simon Obert, who manages the Harvey collection in addition to those of 30+ other composers.]

In short, the electronics provided by the publisher were not really usable (I will explain what this means later on), and as this performance was a relatively low-budget university affair, we could not afford to, say, hire and fly in a specialist from IRCAM, or even dedicate someone solely to the electronics. Or rather, that someone was me, since this piece is the subject of my dissertation and I do a fair amount of work with electronics on the side. Working in tandem with the amazing Abby Aresty from Oberlin, where Tim Weiss conducted the piece (with Ursula Oppens on piano) just a week before our Cornell performance, we managed to make the piece work just under the wire. That is to say, our performance was, for all intents and purposes, the sound check. That process is the subject of this blog post, and until the materials for this piece are updated, I hope it serves as useful documentation for anyone interested in performing an early masterwork of 21st-century music.

First, a word about Harvey for those who aren’t familiar with his work. He was born in 1939 in Sutton Coldfield, UK, just outside Birmingham, and spent much of his childhood as a church chorister amidst the smells and bells of high Anglican musical practice, often singing in two services a day. His father, Gerald Harvey, was an amateur composer, a sort of “English pastoral mystic” as the son puts it, and it is within these deeply musical contexts that JH recalls having a series of epiphanic experiences in his chorister days: feeling subsumed by the otherworldly power of the sacred music he was performing; having occult visions of ghosts, other spirit presences, and life after death; and often stealing into the chapel at St. Michael’s to improvise on the organ. He “lost religion” later on during his years at Cambridge, but continued to love the rituals and their encompassing mystery, and essentially decided that, through his burgeoning desire to be a composer, he would pursue music fixated on the mystical experiences common to most religions. The danger of falling into a kind of “warm bath” of banal, escapist New Age happiness was not lost on him, and so his music, if it may be very bluntly summed up, sought an honest depiction of humanity’s Sisyphean struggle to attain transcendent states of consciousness: music filled with noise, distractions, interruptions, anxiety, fear, and only brief glimpses of a Beyond that could just as quickly disappear under a flash of dark spiritual retrogradation. He eventually became a practicing Buddhist, and many of his later works deal directly with Buddhist texts and teachings. …Towards a Pure Land, Body Mandala, and Speakings form a trilogy of orchestral works written (apparently at mind-blowing speed) between 2005 and 2008, all based on Buddhist thought on the purification of mind, body, and speech. 
Similarly, Wagner Dream is a 2006 opera based on a semi-fictional account of Wagner suffering a heart attack in Venice and receiving a lesson/vision from the Buddha as he dies; somehow it was written at the same time as the aforementioned trilogy. JH saw Buddhism (at least Vajrayana Buddhism, which is what he studied and practiced) as a near-perfect distillation of the spiritual pursuits of most other religions, and as such the Buddhist themes in his works serve as tools for seeking a universalist vision of humanism, which you could say continues a theme of the Enlightenment (itself heavily influenced by Eastern philosophy). His music is at times blindingly ecstatic, a bit as though Scriabin had lived through the transistor age, but not without a hint of the irreverent 1960s wit that made artists like Cage and Kagel masterful musicians (a fact that I’m afraid is all too often lost on modern-day denizens of post-modern thought, but not on this incredible film).

JH’s use of electronics to augment his music, which began quite early on, in the 1960s, and accounts for a large part of his output, was a favorite tool for communicating the impermanence of reality taught in Buddhism (‘Emptiness’, or Śūnyatā): if electronic sounds can trick the ear into believing the worldly impossible, the mind can be moved towards the otherworldly possible. There is so much more to say about the spiritual side of his music, which has in many ways cast him as the Buddhist-tinged successor to the über-Catholic Olivier Messiaen (who, unlike JH, rejected the “mystical” label for his own work), and this is undoubtedly what JH most foregrounded about his artistic outlook. But for now, a bit about Bird Concerto…

Bird Concerto with Pianosong was written for English pianist Joanna MacGregor, and premiered at the Cheltenham Music Festival by Martyn Brabbins and Sinfonia 21 in November of 2001. Harvey seems to have conceived of the piece as a concerto for piano and sampler (he refers to this in his work diary as early as November 1999❁), but says the Californian birds he heard while teaching at Stanford went a long way to reorienting his thinking on the piece from being a “Piano Concerto” to a “Bird Concerto.” In his program notes he writes, “‘real’ birdsong was to be stretched seamlessly all the way to human proportions—resulting in giant birds—so that a contact between worlds is made. When I started to transpose them and slow them down to our natural speeds of perception they began to reveal level after level of ornamentation – baroque curlicues and oriental arabesques.”

The birdsongs❁ used for this piece are, in fact, not recordings of ‘real’ birds but synthetic transcriptions ‘drawn’ by hand by Bill Schottstaedt, one of Harvey’s colleagues at Stanford. In an email to Harvey, Schottstaedt wrote:

I used a bird guide (the “Golden” guide to birds) and a couple of articles from Cornell’s Ornithology lab; these had stamp-sized sonograms which I transcribed by hand (using a magnifying glass) into CLM (actually Mus10) envelopes, then tidied some of them up by ear –– there are regional differences in bird songs. It was a lot of fun, but was a serious strain on the eyes. The result managed to fool (or at least interest) both the AI lab cat (named Marathon for her yowling) and my pet cat, so I figured the experts were satisfied. I very pleased you’ve found them useful!❁

These synthetic songs are loaded onto a sampler, originally a massive rack-mounted Akai unit, and the pianist triggers the samples from a Yamaha synthesizer (whose own sounds are often subtly employed) placed on top of the piano. From several of the most interesting birdsongs, unique scales were extracted and expanded into ‘spaces’❁: collections of pitches arranged in repeating patterns that acted as, to use his word, “sieves” through which his melodic and gestural inclinations would be automatically organized (in other words, the music is ‘magnetized’ to a unique constellation of pitches spanning low to high frequencies). On the ‘human’ end of the spectrum, 15 instrumental ‘objects’ are composed integrating various fragments of these birdsongs; in his sketches he writes, “Just as birds have ‘their songs’, so do we,” and further hints at quotations both of his own music and of other composers❁. JH treats these ‘objects’ compositionally as sampled sounds in and of themselves, and in so doing entangles the human and avian worlds: bird ‘objects’ are slowed down to the human realm of hearing, their pitches and rhythms extracted and distributed into music played by the ensemble, while the human ‘objects’ are elevated into the avian realm through their relationship to specific birdsongs.

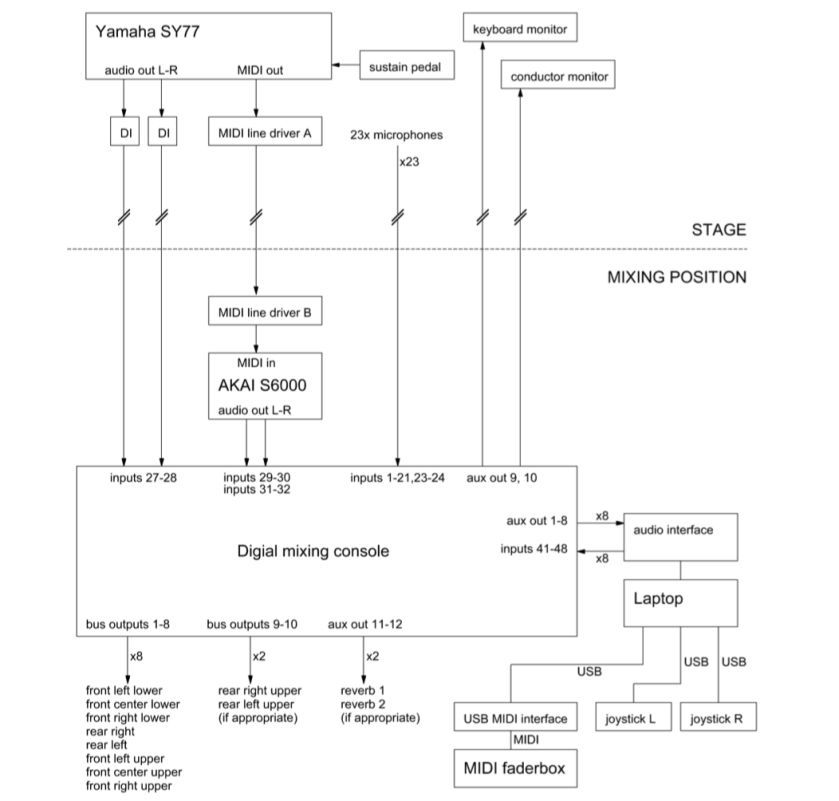

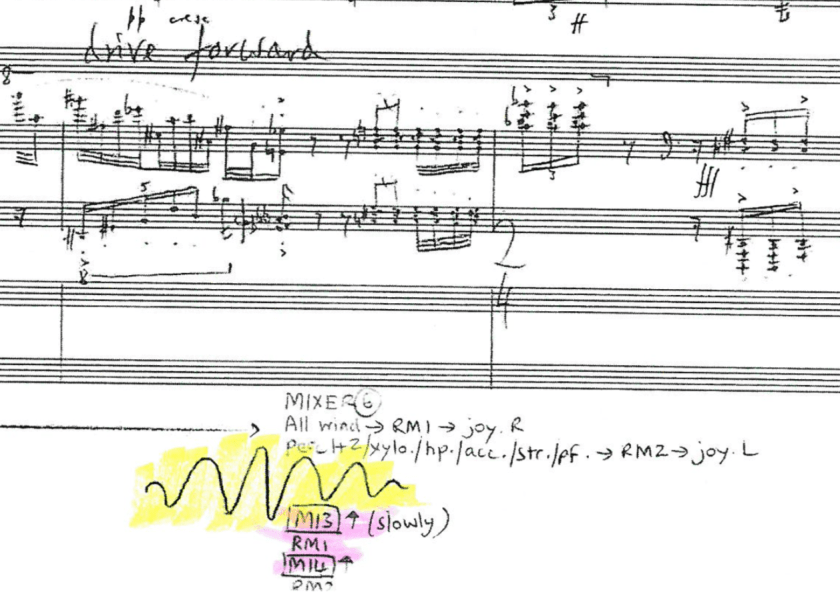
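For readers who think in code, here is a rough sketch of how a pitch ‘space’ can work as a sieve, assuming a space is simply a repeating interval pattern stacked up through the register (as MIDI note numbers) and that ‘magnetizing’ means snapping each note to its nearest neighbor in the space. The interval pattern, the helper names `build_space` and `snap`, and the tie-breaking rule are all my own hypothetical choices for illustration, not Harvey’s actual data or procedure, which lives in his sketches.

```python
from bisect import bisect_left


def build_space(intervals, start, top):
    """Stack a repeating interval pattern (in semitones) upward from `start`.

    Returns every MIDI note from `start` up to and including `top` that the
    pattern reaches. The pattern is a hypothetical stand-in for a
    birdsong-derived scale.
    """
    if not intervals or any(step <= 0 for step in intervals):
        raise ValueError("intervals must be positive semitone steps")
    space = [start]
    k = 0
    while space[-1] + intervals[k % len(intervals)] <= top:
        space.append(space[-1] + intervals[k % len(intervals)])
        k += 1
    return space


def snap(pitch, space):
    """'Magnetize' a pitch to its nearest member of the space (ties go down)."""
    i = bisect_left(space, pitch)
    if i == 0:
        return space[0]
    if i == len(space):
        return space[-1]
    below, above = space[i - 1], space[i]
    return below if pitch - below <= above - pitch else above


if __name__ == "__main__":
    space = build_space([2, 3, 1, 5], start=60, top=84)  # hypothetical pattern
    melody = [61, 64, 68, 80]
    print(space)                             # [60, 62, 65, 66, 71, 73, 76, 77, 82, 84]
    print([snap(p, space) for p in melody])  # [60, 65, 66, 82]
```

Because this hypothetical pattern spans 11 semitones rather than 12, its pitches shift from octave to octave, so the same melody gets pulled somewhere different in each register.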

But that’s just what’s happening on stage between the pianist/‘samplist’ and the rest of the ensemble. Every instrument is miked, making for ca. 24 microphones on stage, each of which is fed to a mixing board out in the audience (attended by one of three ‘diffusionists’) that mixes the incoming signals into 8 ‘groups,’ whose constituent instruments change with each section of the piece. These 8 groups, whose respective levels are controlled by the first diffusionist at the board, are fed into a computer program (Max/MSP) for processing. The incoming ‘humansongs’ are ring modulated (the sound made famous by the Daleks on Doctor Who), a signal processing technique that multiplies each signal by a second ‘carrier’ signal, much as amplitude modulation does in radio transmission, and which, believe it or not, is related to the physiological mechanism by which birds produce sound. Remember that Yamaha synth mentioned earlier? Yamaha’s synthesizers use a form of Frequency Modulation (FM) synthesis, developed by the American composer John Chowning at Stanford, which is effectively the same phenomenon used by birds. Human/avian entanglement in all facets of this piece, both within and without.
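To make the two kinds of modulation a little more concrete, here is a minimal sketch in plain Python (no audio libraries). Ring modulation multiplies each input sample by a sine ‘carrier’, so a 440 Hz tone ring-modulated at 1000 Hz comes out as two sidebands at 560 Hz and 1440 Hz, with the original pitch gone; simple two-operator FM, in the textbook form Chowning described, instead lets one sine wiggle the phase of another, producing sidebands spaced at the modulator frequency. The sample rate, frequencies, index, and function names are all my own assumptions: this is not Harvey’s Max/MSP patch, and the SY77/TG77’s own FM engine is considerably more elaborate than two operators.

```python
import math

SR = 8000  # sample rate in Hz (assumed; kept low so the pure-Python DFT below is quick)


def sine(freq, n, sr=SR):
    """n samples of a unit-amplitude sine wave at `freq` Hz."""
    return [math.sin(2 * math.pi * freq * t / sr) for t in range(n)]


def ring_modulate(signal, carrier_hz, sr=SR):
    """Ring modulation: multiply the input sample-by-sample by a sine carrier."""
    carrier = sine(carrier_hz, len(signal), sr)
    return [x * c for x, c in zip(signal, carrier)]


def fm_tone(carrier_hz, modulator_hz, index, n, sr=SR):
    """Simple two-operator FM: sin(2*pi*fc*t + index * sin(2*pi*fm*t))."""
    return [
        math.sin(2 * math.pi * carrier_hz * t / sr
                 + index * math.sin(2 * math.pi * modulator_hz * t / sr))
        for t in range(n)
    ]


def amplitude_at(signal, freq, sr=SR):
    """Amplitude of the component at `freq`, measured with a single DFT bin.

    Exact only when a whole number of cycles of `freq` fits in the signal
    (true for integer frequencies when len(signal) == sr).
    """
    n = len(signal)
    re = sum(x * math.cos(2 * math.pi * freq * t / sr) for t, x in enumerate(signal))
    im = sum(x * math.sin(2 * math.pi * freq * t / sr) for t, x in enumerate(signal))
    return 2 * math.hypot(re, im) / n


if __name__ == "__main__":
    voice = sine(440, SR)  # one second of a 440 Hz "instrument"
    rm = ring_modulate(voice, 1000)
    for f in (440, 560, 1000, 1440):
        print(f, round(amplitude_at(rm, f), 3))  # energy only at 560 and 1440 Hz
    fm = fm_tone(1000, 100, index=1.0, n=SR)
    for f in (900, 1000, 1100, 1200):
        print(f, round(amplitude_at(fm, f), 3))  # sidebands every 100 Hz around 1000
```

The missing 440 Hz in the ring-modulated output is the point: the instrument’s pitch is replaced by sum and difference frequencies, which goes a long way toward explaining why ring-modulated instruments stop sounding quite like instruments.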

The ring-modulated instrument groups, now ‘birdified,’ with their levels in the software controlled by the second diffusionist, are fed into a part of the computer program that diffuses them throughout a circle of 8 loudspeakers positioned around the audience. The instruments’ apparent position within this circle is controlled by the third diffusionist with two joysticks, which, just visually speaking, has to be the coolest-looking job in the whole piece. This third diffusionist’s joystick gestures ‘fly’ the instrumental sounds around the room, an idea Harvey seems to have been inspired to explore by the writings of the French philosopher Gaston Bachelard, who was himself interested in the sensation of ‘flight’ and motion as the foundation of imagination itself:

A psychology of the imagination that is concerned only with the structure of images ignores an essential and obvious characteristic that everyone recognizes: the mobility of images. Structure and mobility are opposites—in the realm of imagination as in so many others. It is easier to describe forms than motion, which is why psychology has begun with forms. Motion, however, is the more important. In a truly complete psychology, imagination is primarily a kind of spiritual mobility of the greatest, liveliest, and most exhilarating kind. To study a particular image, then, we must also investigate its mobility, productivity, and life. [Bachelard, Air and Dreams, 2]
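As a rough illustration of what the joystick job involves, here is one common way to turn a joystick position into loudspeaker levels: read the stick’s direction as an angle around the room and crossfade between the two nearest speakers with a constant-power (sine/cosine) law. This is a sketch under my own assumptions (speaker 0 straight ahead, speakers numbered clockwise, the stick’s distance from center ignored); I am not claiming Harvey’s diffusion software uses this particular panning law, and `speaker_gains` is a hypothetical name.

```python
import math


def speaker_gains(x, y, n_speakers=8):
    """Map a joystick position to gains for a ring of equally spaced speakers.

    x, y: joystick axes in [-1, 1]; pushing forward (y = +1) points at
    speaker 0, and speaker numbers increase clockwise. Only the stick's
    direction is used; a centered stick (0, 0) defaults to the front.
    """
    angle = math.atan2(x, y) % (2 * math.pi)  # 0 = front, increasing clockwise
    pos = angle / (2 * math.pi / n_speakers)  # position measured in speaker spacings
    a = int(pos) % n_speakers                 # speaker at or counter-clockwise of the source
    b = (a + 1) % n_speakers                  # its clockwise neighbor
    frac = pos - int(pos)                     # 0 at speaker a, approaching 1 at speaker b
    gains = [0.0] * n_speakers
    gains[a] = math.cos(frac * math.pi / 2)   # constant-power crossfade:
    gains[b] = math.sin(frac * math.pi / 2)   # gains[a]**2 + gains[b]**2 == 1
    return gains


if __name__ == "__main__":
    print([round(g, 3) for g in speaker_gains(0.0, 1.0)])  # all in speaker 0 (front)
    print([round(g, 3) for g in speaker_gains(1.0, 0.0)])  # all in speaker 2 (right)
    mid = speaker_gains(math.sin(math.pi / 8), math.cos(math.pi / 8))
    print([round(g, 3) for g in mid])                      # split evenly between 0 and 1
```

The constant-power law keeps a sound from dipping in loudness as it passes between speakers. Real diffusion software may also use the stick’s distance from center, and the actual setup has two joysticks; how they divide the work is not modeled here.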

The jobs of all three diffusionists are notated at the very bottom of the score: the joystick ‘gestures’ in Harvey’s distinctive chicken-scratch, the rest apparently by a considerably less feverish hand.

I had gone through and color-coded three separate scores for the three diffusionists (for our concert, an all-star trio of composers: Kevin Ernste, Piyawat Louilarpprasert, and Christopher Stark), only to realize soon after that Faber had sent us old 2001 editions of the score, which were missing more than half of the crucial markings for the diffusionists. With only a few days left before the concert, I went to the library, copyright infringement be damned, literally cut the school’s copy of Bird Concerto from its binding, and ran it through a copier. I have since apologized to the librarian, who I think has forgiven me, and as for Faber…

In total, what is needed to perform this piece is the following:

- 8 large speakers (≥1000 watts each) and stands (plus 2 subwoofers, if available)

- 2 stage monitors for pianist and conductor

- 23-24 microphones and stands

- a digital mixing board with at least 34 inputs (22-23 preamps), 16 outputs, and an extremely flexible routing architecture

- a Yamaha SY77 or TG77 synthesizer

- an Akai sampler for the birdsongs

- an interface between the computer and the mixing board (for 8 ‘groups’)

- two joysticks

- a 16-channel MIDI fader controller

- a computer with enough processing power to run the diffusion software

- approximately 40 cables long enough to get where they need to go (and extras, since cables tend to die when they are needed most)

If that seems like a dizzying number of things, then yes, you are definitely a human being. This is probably “Tuesday” for the technicians who travel with rock bands or work on television sets, but for modest Classical venues, where electronics or amplification of any kind is looked upon at best as an ‘other’ and at worst as a kind of moral failing, this is a challenge. This attitude is, in fact, something Harvey found fault with in the Classical music world. Our venue at Cornell, Barnes Hall, a charming converted 19th-century chapel, is NOT equipped for any of this. Literally every electronic music concert there is a DIY extravaganza, and every piece of gear has to be schlepped over from the Cornell Electroacoustic Music Center (CEMC), which is itself basically a classroom, a closet, and a few makeshift booths. This piece basically emptied the CEMC’s mic locker; I had to supplement with my own mics (including a pair of DIY binaurals), and we borrowed more from the Theater Arts department and from one of the visiting composers.

Moreover, Cornell does not own a digital mixing board. This matters because the routing for each of the 25 incoming signals is fluid: at about a dozen points in the piece, the destination(s) of each input have to change. On a digital mixing board, these routings can be preset, saved, and switched instantaneously at the click of a button. On an analog board they cannot, unless a small army of tiny hands could be on 25 knobs at once, all coordinated to move simultaneously.
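Here is a toy model of what ‘preset and recall’ means on a digital board, assuming a routing scene is just a table of which groups each input feeds. Recalling a scene swaps every input’s destinations in one step, which is exactly the move an analog board can’t make. The class name, the scene names, and the particular routings below are hypothetical; the real routings change with each section of the piece and come from its own materials, and real boards also store send levels, which this sketch ignores.

```python
class RoutingMixer:
    """Toy digital-mixer routing: inputs feed sets of groups, switched by scenes."""

    def __init__(self, n_inputs, n_groups=8):
        self.n_inputs = n_inputs
        self.n_groups = n_groups
        self.scenes = {}   # scene name -> {input index: frozenset of group indices}
        self.current = {}  # routing currently in effect

    def store_scene(self, name, routing):
        """Validate and save a routing; unlisted inputs go nowhere in this scene."""
        for ch, groups in routing.items():
            if not 0 <= ch < self.n_inputs:
                raise ValueError(f"no such input: {ch}")
            if any(not 0 <= g < self.n_groups for g in groups):
                raise ValueError(f"input {ch} routed to a nonexistent group: {sorted(groups)}")
        self.scenes[name] = {ch: frozenset(groups) for ch, groups in routing.items()}

    def recall(self, name):
        """Change every input's destinations at once (the 'click of a button')."""
        self.current = dict(self.scenes[name])

    def mix(self, samples):
        """Sum one sample per input into the groups that input currently feeds."""
        if len(samples) != self.n_inputs:
            raise ValueError("need exactly one sample per input")
        out = [0.0] * self.n_groups
        for ch, x in enumerate(samples):
            for g in self.current.get(ch, ()):
                out[g] += x
        return out


if __name__ == "__main__":
    board = RoutingMixer(n_inputs=4)                             # tiny stand-in for ~25 inputs
    board.store_scene("section A", {0: {0}, 1: {0, 1}, 2: {2}})  # hypothetical routings
    board.store_scene("section B", {0: {3}, 1: {3}, 3: {7}})
    frame = [1.0, 0.5, 0.25, 2.0]
    board.recall("section A")
    print(board.mix(frame))  # [1.5, 0.5, 0.25, 0.0, 0.0, 0.0, 0.0, 0.0]
    board.recall("section B")
    print(board.mix(frame))  # [0.0, 0.0, 0.0, 1.5, 0.0, 0.0, 0.0, 2.0]
```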

And not all digital mixing boards are created equal. Many digital boards, while loaded with glistening lights, fancy FX, and claims of this-that-and-the-other gizmo-gadget, are fundamentally rooted in the assumption that all anyone would ever want to do with such a piece of gear is mix a band at a local Christian revival. Anything more complicated than that requires either ‘hacking’ the board or migrating into a much higher price bracket, where prices seem to climb exponentially. The boards recommended by the authors of the technical documentation for Bird Concerto are such beasts, but they are older units: new when the piece was written 18 years ago, they are beyond long in the tooth now, and most don’t even have a way to connect to a computer (a real no-brainer these days), necessitating a separate external interface simply to get sound between mixer and laptop. Others were so large (like this DiGiCo board) that there would have been no way to fit them safely into the space without violating some sort of fire code. All this assumes they can be rented, borrowed, or (God willing) purchased on a university budget, which itself exists in a perpetual state of quantum entanglement of way more and way less than you expect, depending on the cycle of the moon, how many undeclared freshmen are within a 100-yard radius, and whether such a project is politically useful to a faculty member.

But as I flip through Harvey’s sketches, I come across these beautiful lines of scribbled thoughts, free associations on the as-yet-unformed piece as it flits about the aviary of his imagination:

PARADISE GARDEN … Wonderful magic calm! … Paradise garden meditation the heart and soul of the piece ❁

Remember, nobody said ‘wonderful magic calm’ would be easy, least of all Harvey. After all, his take on the human condition is one of constant struggle towards spiritual transcendence. I have been through the electronics of this piece with a fine-tooth comb, designing and implementing the patches we used for our performance (I even bought a secondhand Yamaha TG77 for this piece, which is awesome and which I will write about at some point), and my brain still gets twisted up thinking about it. I imagine that’s how he saw these tools, and I get the sense he wanted them to stay that way, to retain some of their beautiful mystery, tornadic confusion, unknowability. The English composer Julian Anderson told me about a workshop he attended as a graduate student, in which attendees would get to work with Harvey on composition with electronics. Harvey, by this point well known for his electro-acoustic work, announced that the technician was not able to attend the first day and singled out Anderson to help him with the equipment. Anderson, somewhat peeved that he, an attendee, was now tasked with playing technician for the master, put up some resistance, only to acquiesce when it became evident Harvey didn’t know how to turn anything on. John Harbison, who knew Harvey very briefly when they were at Princeton, separately said that Harvey had told him electronics were a kind of literal magic. I mean, he’s right, isn’t he? Think about the understanding of quantum physics that was required to make the first transistor turn on and off, then think about the billions of those things packed onto a single smartphone chip the size of a fingernail clipping, and I’m willing to believe we’re all at Hogwarts.

The business of all this electronic trouble is not a fault of creation; it is simply a part of that struggle… or used to be, anyway. Is it really appropriate for someone who grew up in the age of digital audio and smartphones to assume the attitude of someone who grew up in the age of reel-to-reel tape? What insights are afforded by decoupling yourself from the nuts and bolts of creation if you have grown up in an age of technological demystification? Can technology really represent spiritual transcendence, or is non-technology the new spiritual quest? These are questions of performance practice unfolding before our eyes, as fascinating as they are disquieting, especially when it comes time to ask the question typically reserved for shawms and harpsichords: “what is the sound of this music?”

I will write about our performance and our electronic solutions for Bird Concerto in a subsequent post (warning: it will be wonkish, but if this guy can make a video about the drill on the Mars rover, I am giving myself permission to be a nerd), but for now, this pithy reminder, again from Harvey’s sketches for Bird Concerto:

Don’t forget: lead every being to bliss ❁